Video is the element most often associated with the term multimedia, and it is also widely touted as the ‘bandwidth cruncher’. Applications such as desktop video teleconferencing and Internet radio/TV require that sound (e.g., voice, music) and moving images (e.g., video, animation) be sent in real time. To preserve the appearance of continuous sound or image, such applications cannot tolerate delay, which poses real challenges for network equipment developers and network designers. For the network infrastructure, the requirement is simple: the medium must carry data at an adequate rate.

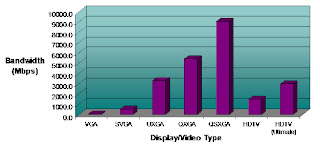

Digital video is displayed on a screen as a grid of individual dots, or pixels. To display true, photo-realistic color, each pixel requires three bytes of data, one each for its red, green, and blue (RGB) color components. Taking the example of a UXGA display showing uncompressed full-screen video, a single frame requires 5,760,000 bytes (1600 pixels x 1200 pixels x 3 bytes/pixel).

The perception of motion occurs when a series of frames is displayed in rapid succession; the rate at which the frames are shown is known as the refresh rate. For example, standard analog television uses a refresh rate of 30 frames per second (25 frames per second in many parts of the world). Higher resolution applications require refresh rates more than double that, on the order of 72 frames per second. Sending uncompressed UXGA video at that rate over a data network would require a throughput of 8 bits/byte x 5,760,000 bytes/frame x 72 frames/second = 3.3 Gb/s.
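The arithmetic above is easy to reproduce for other resolutions and frame rates. The following is a minimal Python sketch; the function names `frame_bytes` and `uncompressed_bps` are illustrative choices, not part of any standard, and the calculation ignores network protocol overhead.

```python
def frame_bytes(width, height, bytes_per_pixel=3):
    """Size of one uncompressed frame in bytes (3 bytes/pixel for RGB)."""
    return width * height * bytes_per_pixel


def uncompressed_bps(width, height, fps, bytes_per_pixel=3):
    """Raw video bit rate in bits per second, with no compression."""
    return frame_bytes(width, height, bytes_per_pixel) * 8 * fps


# UXGA (1600 x 1200) at 72 frames per second, as in the text
uxga_frame = frame_bytes(1600, 1200)
uxga_rate = uncompressed_bps(1600, 1200, 72)

print(f"{uxga_frame:,} bytes/frame")   # 5,760,000 bytes/frame
print(f"{uxga_rate / 1e9:.1f} Gb/s")   # 3.3 Gb/s
```

Changing the arguments (for example, 30 frames per second instead of 72) shows how quickly the raw bit rate scales with resolution and refresh rate.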

At 3.3 Gb/s, it is clear that constant digital video traffic would quickly congest most networks, especially if document sharing and text/image file transfers are taking place at the same time. Gigabit networking is fully justified for these applications.

In reality, most video is transmitted compressed, using standards-based encoding schemes such as MPEG-2 (from the Moving Picture Experts Group) that require a fraction of the data needed for uncompressed video. However, a growing number of applications, such as medical CAT scans, X-rays, and some entertainment formats, require more uncompressed capability. Data compression techniques reduce bandwidth requirements by an order of magnitude, but the degree of compression that is acceptable depends on the quality desired for the sound or image. Various algorithms allow developers and end users to tune the degree of compression to an application’s requirements, but there is always a cost in quality. The other penalty is latency: sophisticated compression schemes add delay that can be burdensome for real-time two-way video interactions.
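To get a rough feel for what compression buys, the sketch below estimates how many UXGA streams at 72 frames per second would fit on a single link. The compression ratios (10:1 and 50:1) and the 1 Gb/s link are assumptions chosen for illustration only; real ratios depend on the codec, the content, and the quality target, and the calculation ignores protocol overhead. The helper names are hypothetical.

```python
# Raw UXGA rate: 8 bits/byte x 1600 x 1200 pixels x 3 bytes/pixel x 72 frames/s
UNCOMPRESSED_BPS = 8 * 1600 * 1200 * 3 * 72


def compressed_bps(raw_bps, ratio):
    """Bit rate after compressing by an assumed ratio (10 means 10:1)."""
    return raw_bps / ratio


def streams_per_link(link_bps, stream_bps):
    """Whole number of streams that fit on a link, ignoring overhead."""
    return int(link_bps // stream_bps)


LINK_BPS = 1e9  # assumed 1 Gb/s link

for ratio in (1, 10, 50):  # illustrative ratios, not codec measurements
    rate = compressed_bps(UNCOMPRESSED_BPS, ratio)
    count = streams_per_link(LINK_BPS, rate)
    print(f"{ratio}:1 -> {rate / 1e6:.0f} Mb/s per stream, {count} stream(s)")
```

Under these assumptions, a single uncompressed stream does not fit on the link at all, while a 10:1 ratio leaves room for only a few streams. That is why the acceptable degree of compression matters so much in practice.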

But this is theory. What is the reality?

What are your experiences with deploying and/or using video on networks and devices whether they be wired or wireless?